Overview

YouTube is expanding its deepfake detection tools to include politicians and journalists, reinforcing the platform’s stance that synthetic media poses tangible risks to public discourse and democratic processes. The move comes as AI-generated videos become more accessible and persuasive, intensifying calls for greater platform responsibility, clearer regulatory benchmarks, and safer information ecosystems ahead of elections and major policy debates in 2026.

What Just Happened

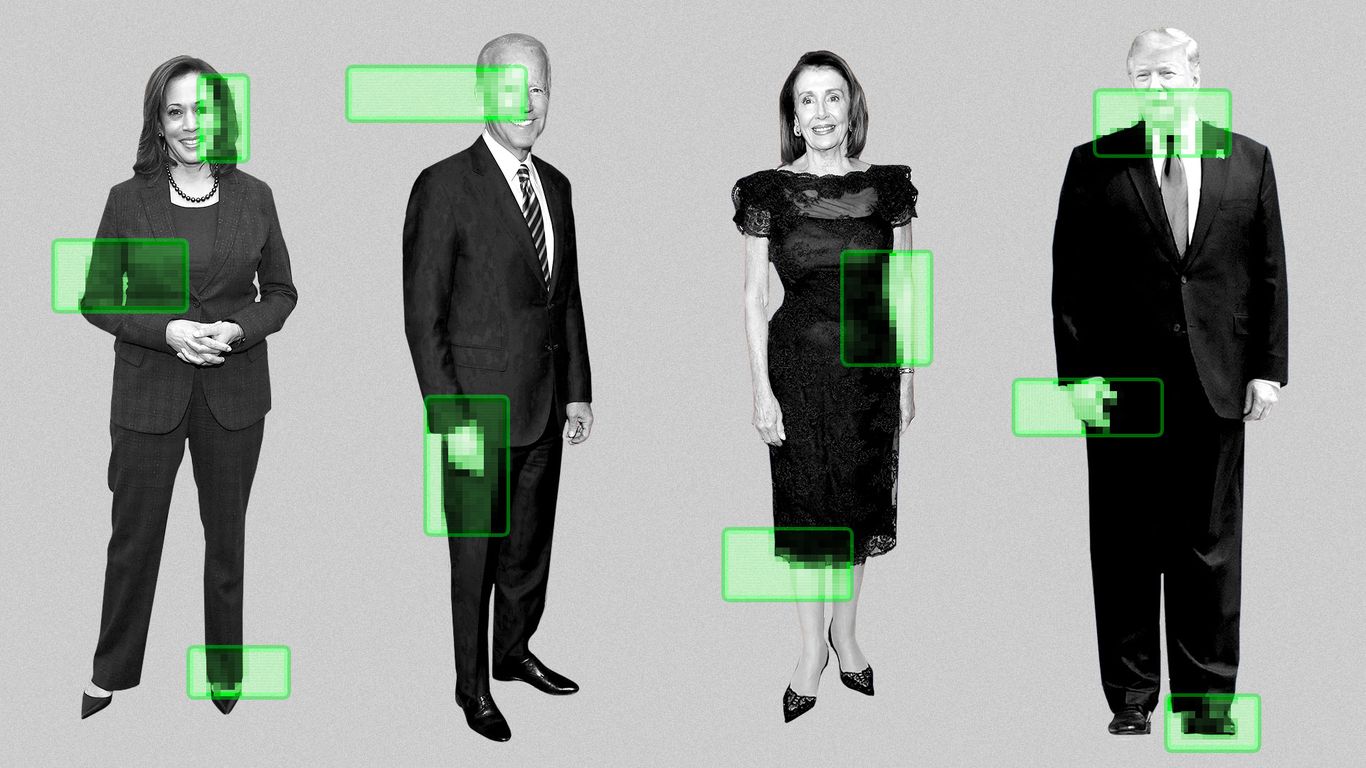

YouTube announced an enhancement to its existing deepfake detection framework, extending coverage to high-profile public figures such as political leaders and prominent journalists. The expansion aims to identify manipulated videos with higher confidence, flag suspicious content, and give viewers contextual information about a video's authenticity. It aligns with a broader push by social platforms to curb misinformation while balancing free expression and innovation in AI-assisted media creation.

Policy Snapshot

- Platform safeguards: Expanded detection capabilities, improved watermarking or provenance signals, and clearer labeling for AI-generated content.

- Verification tools: Potential integration with fact-checking partners and transparency features that explain why a video was flagged.

- Standards alignment: Efforts to harmonize detection criteria with evolving regulatory expectations around misinformation, election integrity, and digital governance.

Who Is Affected

The updated toolset targets creators and disseminators of political content, including elected officials, government spokespeople, and journalists who frequently publish public-interest material. It also affects viewers, who will gain easier access to veracity indicators that help them distinguish authentic footage from synthetic media. For political actors, the change has implications for messaging strategy, media risk management, and compliance with platform rules on disinformation.

Economic or Regulatory Impact

- Platform compliance costs: Enhanced detection and labeling workflows require ongoing investment in AI models, moderation staffing, and user interface improvements.

- Regulation dynamics: The expansion dovetails with regulatory discussions around AI accountability, transparency in synthetic media, and potential requirements for platform-level disclosures in political content.

- Market signal: By prioritizing deepfake detection for prominent public figures, YouTube signals a defensive posture against misinformation while giving advertisers and publishers a more predictable environment in which to operate.

Political Response

Policymakers, watchdog groups, and media organizations are likely to scrutinize the tool’s effectiveness, transparency, and potential biases. Advocates for stronger election safeguards may push for formal regulatory standards that require robust synthetic media detection, independent audits, and clear consumer disclosures. Critics could question the scope of coverage, potential overreach, or the risk of censorship in the name of “defense against deception.”

What Comes Next

- Evaluation and audits: Expect independent evaluations of detection accuracy, false positives/negatives, and user experience.

- Regulatory dialogue: Continued discussions on AI governance frameworks, including platform accountability for political content and the role of detectors in safeguarding electoral integrity.

- Innovations in transparency: Ongoing enhancements to labeling, provenance metadata, and user-facing explanations to improve trust without stifling legitimate political commentary.

Tone and Implications

The move reflects a pragmatic, forward-looking approach to AI governance in the media landscape. By extending coverage to politicians and journalists, YouTube acknowledges the heightened stakes of synthetic media in public life and the need for credible signals of authenticity. Heading into 2026, it points toward a more mature and more regulated digital information environment, one in which platform tools, policymakers, and civil society collaborate to preserve democratic discourse while supporting innovation in AI-enabled communication.